The History of AI, Part 2: The Language Revolution (2018–2020)

TL;DR: "BERT and GPT showed two paths – but both proved: machines can understand and generate language."

– Till Freitag

The Transformer Architecture Unleashed

After the Transformer architecture was introduced in 2017, a race began. Two approaches emerged – and both fundamentally changed the AI world.

2018: BERT – Google Understands Context

In October 2018, Google released BERT (Bidirectional Encoder Representations from Transformers). The trick: BERT reads text in both directions at once, taking the words to the left and right of each token into account, and it captured context better than earlier models on many language-understanding benchmarks.

An Example

The sentence: "I went to the bank to deposit my check."

- Before: Models struggled with whether "bank" meant a financial institution or a river bank

- BERT: Understands through context ("deposit," "check") that it's about a financial institution
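
The difference between one-directional and bidirectional reading can be made concrete with a toy sketch. It only shows which words are visible at the position of "bank"; it is not how BERT works internally, and the function names are hypothetical, chosen for illustration.

```python
# Toy illustration: which words can inform the representation of "bank"?
# This only models visibility of context, not BERT's actual attention mechanism.
sentence = "I went to the bank to deposit my check".split()
target = sentence.index("bank")

def left_to_right_context(tokens, i):
    """Words a strictly left-to-right model has seen when it reaches position i."""
    return tokens[:i]

def bidirectional_context(tokens, i):
    """Words on both sides of position i, as a bidirectional encoder can use."""
    return tokens[:i] + tokens[i + 1:]

# The disambiguating words named in the example above.
clues = {"deposit", "check"}

left_clues = clues & set(left_to_right_context(sentence, target))
both_clues = clues & set(bidirectional_context(sentence, target))

print(sorted(left_clues))  # []
print(sorted(both_clues))  # ['check', 'deposit']
```

Both clue words come after "bank" in the sentence, so only the bidirectional view has access to them at that position.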

In October 2019, Google integrated BERT directly into Search and described it as its biggest leap forward in five years. Search got noticeably better at working out what you mean, not just what you type.

2019: GPT-2 – "Too Dangerous to Release"

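OpenAI announced GPT-2 in February 2019 – but released only a smaller version at first. The company initially held back the full model, reasoning that it was too dangerous for the public. The fear: mass-generated fake content. The full model followed in stages, with the complete release in November 2019.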

GPT-2 could write astonishingly coherent text: entire news articles, stories, even attempts at simple programming tasks. With 1.5 billion parameters, it was among the largest language models of its time.

The Debate Begins

The GPT-2 controversy marked the beginning of a discussion that continues to this day:

- Safety vs. Openness – Who decides what's "too dangerous"?

- Dual Use – Every AI capability can be useful or harmful

- Developer Responsibility – OpenAI became the center of this debate

2020: GPT-3 – The Paradigm Shift

In mid-2020, GPT-3 appeared with 175 billion parameters – over 100x larger than GPT-2. The paper came out in May, and API access followed in June. And suddenly it looked as if scaling alone could produce emergent capabilities.

GPT-3 could do things that nobody had explicitly trained it to do:

- Write programming code

- Translate between languages

- Solve mathematical problems

- Compose creative texts in various styles

- Learn from just a few examples (few-shot learning)
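
Few-shot learning means the examples live in the prompt itself rather than in the training data. The following is a minimal sketch of how such a prompt might be assembled; the function name and the "Input:/Output:" layout are assumptions made for illustration, and no model or API is called.

```python
# Minimal sketch of a few-shot prompt: a task description, a handful of
# worked examples, and a final query the model is expected to complete.
# The function name and the Input/Output layout are hypothetical.
def build_few_shot_prompt(task, examples, query):
    lines = [task, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The prompt ends with an open "Output:" for the model to fill in.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)

examples = [("cheese", "fromage"), ("house", "maison")]
prompt = build_few_shot_prompt("Translate English to French.", examples, "bread")
print(prompt)
```

The model's weights stay unchanged; swapping in different examples turns the same model toward a different task.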

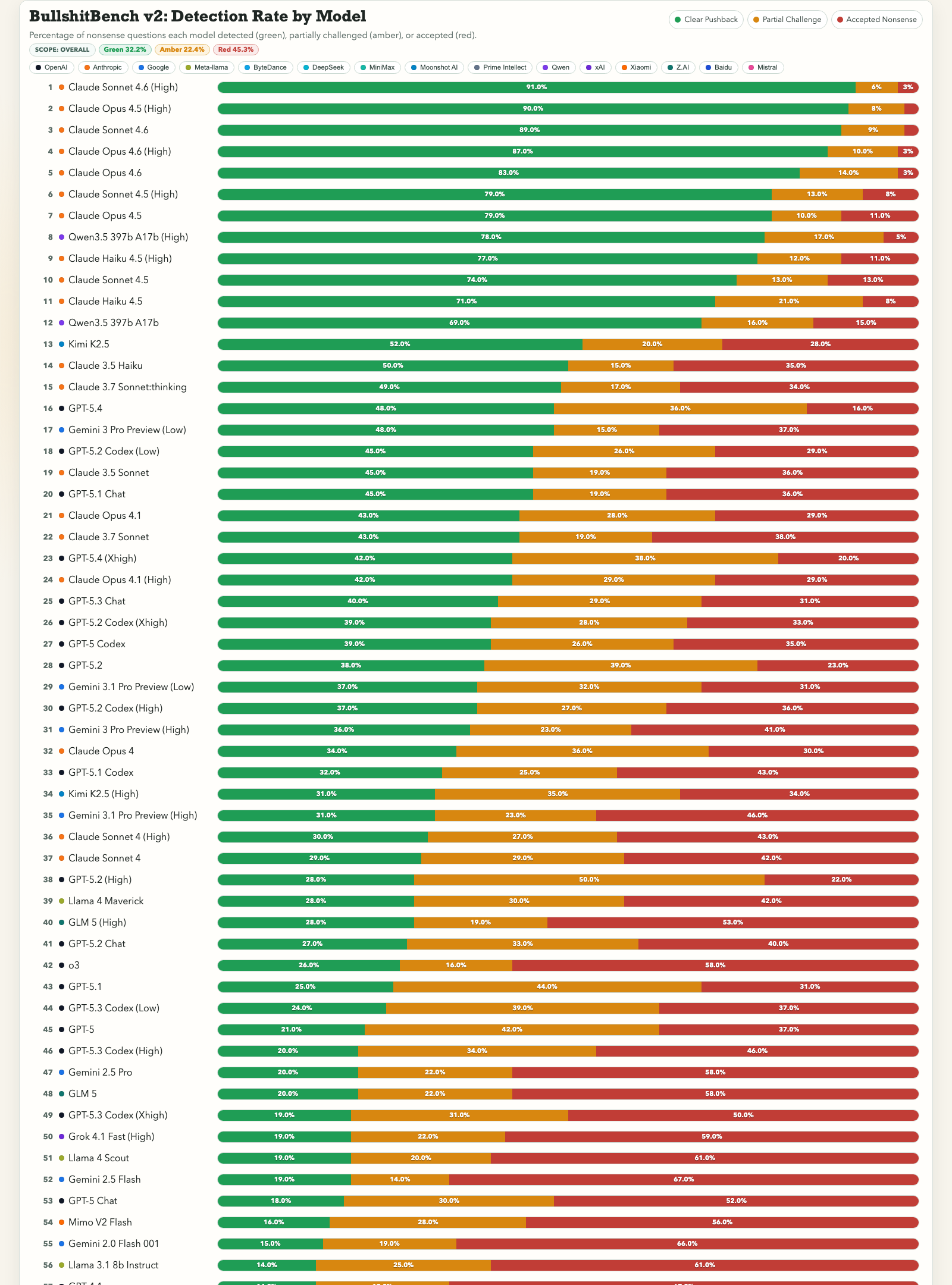

The Scaling Hypothesis

| Model | Parameters | Year | Capabilities |
|---|---|---|---|
| GPT-1 | 117M | 2018 | Simple text completion |
| GPT-2 | 1.5B | 2019 | Coherent paragraphs |
| GPT-3 | 175B | 2020 | Code, translation, reasoning |

The message was clear: More parameters = more capabilities. The so-called scaling hypothesis became the driving force of the entire industry.
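
To put the jumps in perspective, the growth factors can be computed from the parameter counts in the table above. This is a small arithmetic sketch that uses only those three figures.

```python
# Parameter counts taken from the table above.
models = {"GPT-1": 117e6, "GPT-2": 1.5e9, "GPT-3": 175e9}

# Growth factor from each model generation to the next.
names = list(models)
growth = {}
for prev, curr in zip(names, names[1:]):
    growth[f"{prev} -> {curr}"] = models[curr] / models[prev]

for step, factor in growth.items():
    print(f"{step}: about {factor:.0f}x more parameters")
# GPT-1 -> GPT-2: about 13x more parameters
# GPT-2 -> GPT-3: about 117x more parameters
```

The step from GPT-2 to GPT-3 was roughly nine times steeper than the step before it.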

GitHub Copilot – AI Becomes a Tool

At the end of 2020, development of GitHub Copilot began, based on GPT-3 and later on Codex, a GPT-3 descendant fine-tuned on code. The technical preview followed in June 2021. It was one of the first times a large language model was built directly into a product that millions of people now use daily.

Copilot showed: AI is no longer a future concept. It sits in your editor and writes code with you.

What We Learn from This Era

The years 2018–2020 brought three fundamental insights:

- Language is the key – Whoever masters language can master almost anything

- Scaling works – Larger models can do qualitatively new things

- AI becomes product – From research to everyday work

But the truly big things were still to come.

Continue with Part 3: The ChatGPT Moment – AI Reaches the World (2022–2023)