make.com Performance & Operations Optimization: Bundle Size, Filters, Aggregators (2026)

TL;DR: "Performance in Make.com is an operations question: filter as early as possible, keep bundles minimal, use aggregators instead of loops, and use sub-scenarios for reuse and parallelization – this cuts costs by 40–70% and runtimes often by a factor of 5."

– Till Freitag

Why Performance in Make.com Equals Money

Unlike n8n (self-hosted) or your own worker, in Make you pay for every executed operation. A scenario processing 10,000 bundles per day that needs 8 ops per bundle instead of 4 will cost you double at the end of the month – without anyone getting more output.
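The doubling is easy to verify with a few lines of arithmetic. The sketch below is illustrative only: the 30-day month is an assumption, and no price per operation is included because that depends on your plan.

```python
# Illustrative arithmetic for the example above.
# DAYS_PER_MONTH is an assumption, not a Make billing rule.
DAYS_PER_MONTH = 30


def monthly_operations(bundles_per_day: int, ops_per_bundle: int) -> int:
    """Total operations consumed in one month."""
    return bundles_per_day * ops_per_bundle * DAYS_PER_MONTH


lean = monthly_operations(10_000, 4)     # scenario with 4 ops per bundle
bloated = monthly_operations(10_000, 8)  # same output, 8 ops per bundle

print(f"lean: {lean:,} ops, bloated: {bloated:,} ops, factor: {bloated / lean}")
```

Whatever your plan charges per operation, the factor between the two variants stays the same.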

Performance optimization in Make is therefore not cosmetic – it's directly visible cost engineering. And it improves runtime, stability, and maintainability as a bonus.

The Five Levers at a Glance

| Lever | Effect on operations | Effect on runtime | Effort |
|---|---|---|---|
| Filter early | ⬇⬇⬇ | ⬇⬇ | Low |
| Reduce bundle size | ⬇⬇ | ⬇⬇⬇ | Medium |
| Aggregator instead of loop | ⬇⬇ | ⬇⬇ | Medium |
| Sub-scenarios & parallelization | ⬇ | ⬇⬇⬇ | High |
| Caching via data stores | ⬇⬇⬇ | ⬇⬇ | Medium |

The rest of this article walks through each lever with concrete patterns.

Lever 1: Filter as Early as Possible

Rule: Any bundle that's no longer needed after module N should have been dropped after module 1.

Anti-Pattern

Trigger: List Items (500 bundles)

→ Get additional data (500× API call)

→ Transform (500×)

→ Filter: only status = "active" (50 bundles left)

→ Write to monday (50×)

Cost: 500 + 500 + 500 + 50 = 1,550 operations for 50 useful outputs.

Pattern

Trigger: List Items with server-side filter "status=active" (50 bundles)

→ Get additional data (50×)

→ Transform (50×)

→ Write to monday (50×)

Cost: 50 + 50 + 50 + 50 = 200 operations, about 7.75× fewer than the 1,550 of the anti-pattern.
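The two totals can be reproduced with a tiny model. This is a sketch that mirrors the counting used in the two examples above (one operation per bundle a module handles, the filter itself counted as free); the function name and the `"module"`/`"filter"` markers are hypothetical, not Make terminology.

```python
# Sketch of the counting used in the examples: every module costs one
# operation per bundle it handles; the filter itself is counted as free.
def count_operations(bundles_in: int, stages: list[str], keep_ratio: float) -> int:
    """`stages` lists "module" and "filter" markers in execution order."""
    bundles = bundles_in
    total = 0
    for stage in stages:
        if stage == "filter":
            # Only the matching share of bundles continues downstream.
            bundles = round(bundles * keep_ratio)
        else:
            total += bundles
    return total


# Anti-pattern: 500 bundles, filter (10% match) only before the last module.
late = count_operations(500, ["module", "module", "module", "filter", "module"], 0.1)

# Pattern: the server-side filter means the trigger already returns 50 bundles.
early = count_operations(50, ["module", "module", "module", "module"], 1.0)

print(late, early, late / early)
```

Moving the `"filter"` marker one position to the left at a time shows how much each unfiltered module costs.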

Concretely That Means

- Filter server-side whenever the API allows (monday Items by Column Value, Airtable Filter Formula, HubSpot Search API with filter group)

- Make filters directly after the trigger, not just before the last module

- Router with filter instead of multiple parallel filter paths – decide once, then split

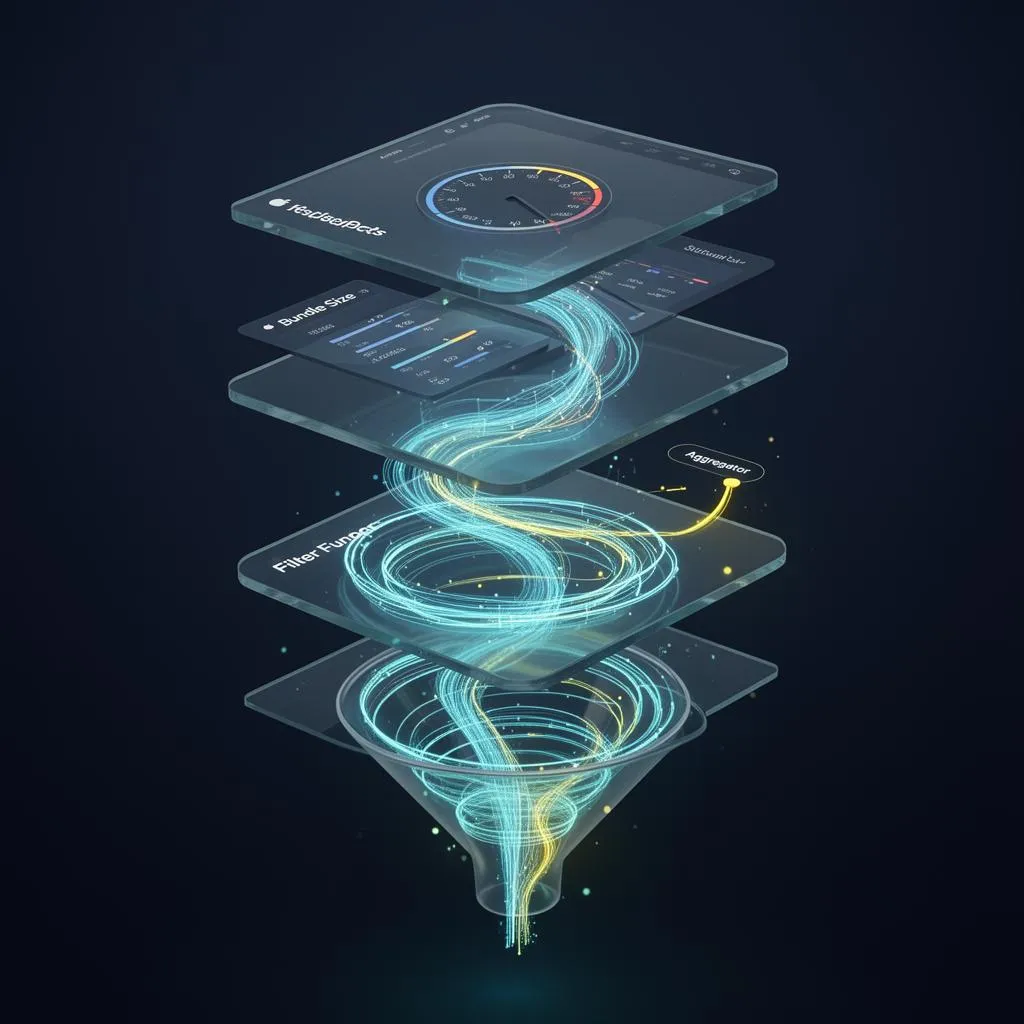

Lever 2: Actively Control Bundle Size

Every module transports a bundle. The bigger the bundle, the slower the processing – especially with JSON parsing, iterators, and HTTP requests.

Pattern: Set Variable with Whitelist

A Set Multiple Variables module right after the trigger that only forwards the fields actually needed:

{
"id": {{1.id}},
"email": "{{1.contact.email}}",
"amount": {{1.deal.amount}}
}

Instead of dragging the full 50-field bundle through, the rest of the chain works with a lean 3-field object. Effects:

- HTTP modules are measurably faster

- Iterator/aggregator memory shrinks drastically

- You implicitly document which data is even used
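Outside of Make, the same whitelist idea looks roughly like the sketch below. The `WHITELIST` mapping, the `pick` helper, and the sample bundle are hypothetical; only the three field paths come from the example above.

```python
from typing import Any

# Hypothetical whitelist: output field name -> dotted path in the trigger bundle.
WHITELIST = {
    "id": "id",
    "email": "contact.email",
    "amount": "deal.amount",
}


def pick(bundle: dict[str, Any], whitelist: dict[str, str]) -> dict[str, Any]:
    """Return a lean copy of `bundle` with only the whitelisted paths.

    Missing paths resolve to None instead of raising.
    """
    lean: dict[str, Any] = {}
    for name, path in whitelist.items():
        value: Any = bundle
        for key in path.split("."):
            value = value.get(key) if isinstance(value, dict) else None
        lean[name] = value
    return lean


# Made-up sample data standing in for a large trigger bundle.
full_bundle = {
    "id": 42,
    "contact": {"email": "a@example.com", "phone": "000"},
    "deal": {"amount": 1200, "stage": "won"},
    "internal_notes": "never leaves the scenario",
}

lean_bundle = pick(full_bundle, WHITELIST)
print(lean_bundle)
```

The whitelist doubles as documentation: anything not listed in it is provably unused downstream.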

Anti-Pattern: "Pass Through Everything"

Never dump the entire {{1.}} bundle into an HTTP body "because the backend will pick the right thing". That wastes bandwidth, bloats logs, and makes schema changes silently dangerous.

Lever 3: Aggregator Instead of Iterator + Loop

Iterators in Make multiply the operation count by the bundle count. With large lists that's deadly. Aggregators roll multiple bundles into one – and with them their API calls.

Anti-Pattern: 100× Single Inserts

Iterator (100 bundles)

→ HTTP POST /api/items (100× one item)

Cost: 100 operations + 100 API calls + rate-limit risk.

Pattern: Array Aggregator → Bulk Insert

Iterator (100 bundles)

→ Array Aggregator (group all)

→ HTTP POST /api/items/bulk (1× 100 items)

Cost: 1 operation for the bulk call. Plus: many APIs (monday, HubSpot, Notion, Airtable) provide bulk endpoints with significantly better rate-limit quotas.
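Bulk endpoints usually cap how many items one request may carry, so the aggregated array often has to be cut into batches. The sketch below shows that batching step only; `MAX_BATCH` is a hypothetical limit (check the target API's documentation), and no HTTP request is actually sent.

```python
from typing import Iterator, Sequence


def chunked(items: Sequence[dict], size: int) -> Iterator[Sequence[dict]]:
    """Yield consecutive slices of `items` with at most `size` elements."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]


# 100 items, as in the example above.
items = [{"name": f"item-{i}"} for i in range(100)]

MAX_BATCH = 100  # hypothetical per-request limit of the bulk endpoint
batches = list(chunked(items, MAX_BATCH))

# Each batch would become the body of one bulk request.
print(f"{len(items)} items -> {len(batches)} bulk call(s)")
```

With a limit of 25 items per request, the same 100 items would need four bulk calls, still far fewer than 100 single inserts.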

Which Aggregator When?

| Aggregator | Use Case |
|---|---|
| Array Aggregator | Multiple bundles → one JSON array (bulk API calls) |
| Text Aggregator | Bundles → one text (Slack message, email body, markdown report) |
| Numeric Aggregator | Sums, averages, counts |
| Table Aggregator | CSV/HTML tables for emails or reports |
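For readers who think in code, the four aggregator types map loosely onto familiar operations. The sketch below is an analogy, not a description of how Make implements them; the sample bundles and field names are made up.

```python
import csv
import io
from statistics import mean

# Made-up bundles, as an iterator might emit them one by one.
bundles = [
    {"name": "Ada", "amount": 100},
    {"name": "Bob", "amount": 250},
    {"name": "Cy", "amount": 70},
]

# Array aggregator: many bundles -> one list, ready for a bulk API body.
array_result = [{"name": b["name"], "amount": b["amount"]} for b in bundles]

# Text aggregator: many bundles -> one text, e.g. a chat message.
text_result = "\n".join(f"- {b['name']}: {b['amount']}" for b in bundles)

# Numeric aggregator: sums, averages, counts.
amounts = [b["amount"] for b in bundles]
numeric_result = {"sum": sum(amounts), "avg": mean(amounts), "count": len(amounts)}

# Table aggregator: many bundles -> one CSV table.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["name", "amount"], lineterminator="\n")
writer.writeheader()
writer.writerows(bundles)
table_result = buffer.getvalue()

print(text_result)
print(numeric_result)
print(table_result)
```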

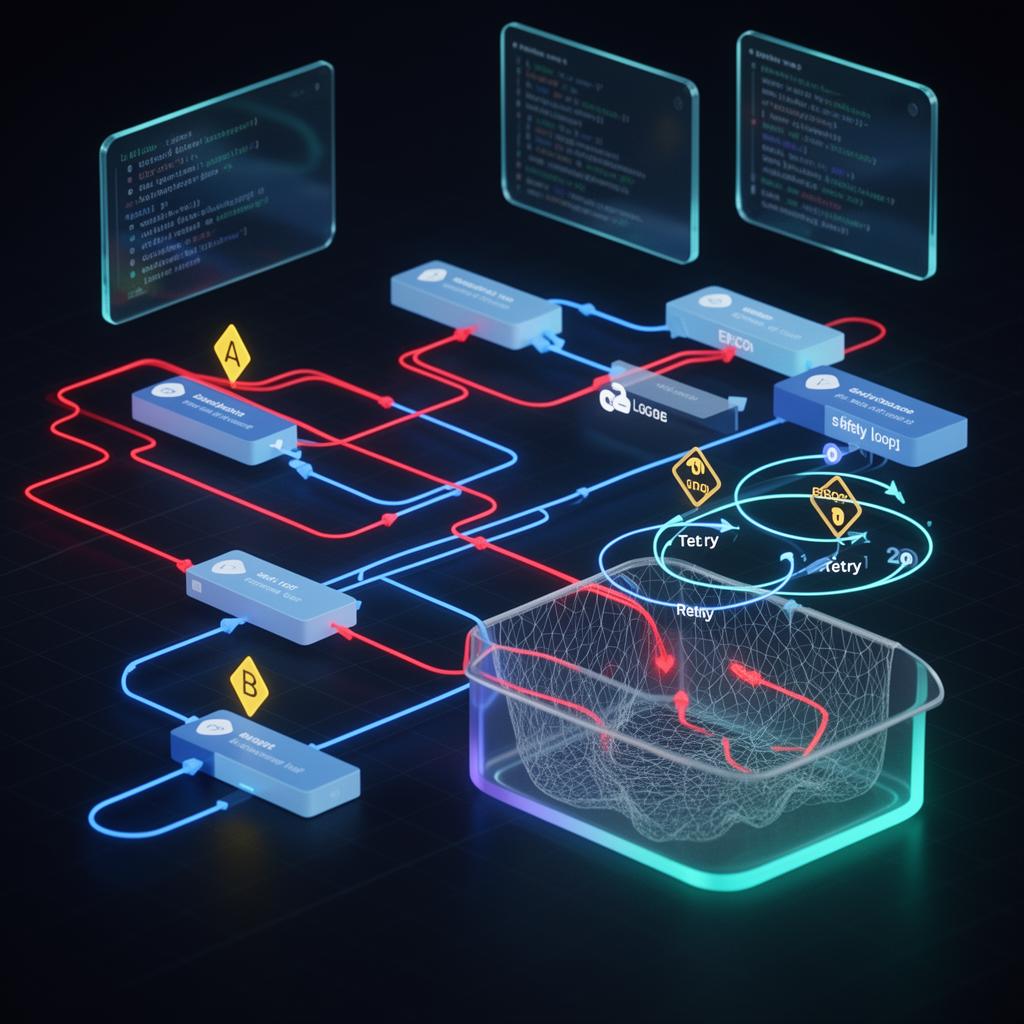

Lever 4: Sub-Scenarios & Parallelization

Sub-scenarios are to Make what functions are to code: reusable building blocks with a clear interface (input + output). They bring three performance advantages:

- Parallelization: Sub-scenarios run asynchronously in their own workers

- Granularity: You can scale or redeploy individual sub-scenarios

- Maintainability: A bug fix in one place applies everywhere

Pattern: Fan-Out with Sub-Scenarios

Main scenario:

Trigger → Iterator (1,000 bundles)

→ Make a call: "process-single-item" sub-scenario (async)

Sub-scenario "process-single-item":

Trigger: Webhook

→ Enrich → Validate → Write

Instead of serially processing 1,000 bundles in the main scenario, the main scenario fires 1,000 webhook calls and is done in seconds. The sub-scenarios run in parallel, capped by your operations plan limit.

⚠️ Watch out for target API rate limits. Parallelization often shifts the bottleneck from the Make worker to the target API. Plan throttling via Sleep modules or queue patterns.
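The fan-out-with-throttling idea can be sketched in code as bounded concurrency. This is an analogy in Python's `asyncio`, not Make's execution model: `process_single_item` stands in for the sub-scenario, and `MAX_CONCURRENT` is a hypothetical cap you would derive from the target API's rate limit.

```python
import asyncio

MAX_CONCURRENT = 10  # hypothetical cap derived from the target API's rate limit


async def process_single_item(item_id: int) -> int:
    """Stand-in for the sub-scenario (Enrich -> Validate -> Write)."""
    await asyncio.sleep(0.01)  # simulated work
    return item_id


async def fan_out(item_ids: list[int], limit: int) -> tuple[list[int], int]:
    """Process all items in parallel, with at most `limit` in flight at once.

    Returns the results in input order and the observed peak concurrency.
    """
    semaphore = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def guarded(item_id: int) -> int:
        nonlocal in_flight, peak
        async with semaphore:
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await process_single_item(item_id)
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(guarded(i) for i in item_ids))
    return list(results), peak


results, peak = asyncio.run(fan_out(list(range(100)), MAX_CONCURRENT))
print(f"processed {len(results)} items, peak concurrency {peak}")
```

The semaphore plays the role of the throttle: raising the limit shortens total runtime until the target API starts rejecting requests.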

Anti-Pattern: Monster Scenario

A single scenario with 40 modules, three routers, and two nested iterators is:

- Hard to test

- Hard to monitor

- Completely down on a single error

Rule of thumb: If a scenario has more than 15–20 modules, it should be split up.

Lever 5: Caching via Data Stores

Repeated API calls for rarely changing data simply burn operations. Make Data Stores work as a cheap cache.

Pattern: Lookup Cache for Master Data

1. Search Data Store: key = customer_id

2. Router:

- Branch A: If found → bundle has data, continue

- Branch B: If not → API call → Add to Data Store → continue

The first call for a customer_id costs one API call. All subsequent ones – until TTL expires – are a single data-store read. With 1,000 bundles and only 50 unique customers, this saves 950 API calls per run.

TTL & Invalidation

Data Stores don't have native TTL. Build it yourself:

- Store cached_at as a field

- Filter on lookup: cached_at > now() - 24h

- Periodic cleanup scenario that deletes old records
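The lookup cache with a self-built TTL can be sketched as follows. A plain dictionary stands in for the data store, `fake_api` is a made-up stand-in for the real master-data call, and the class and its method names are hypothetical; only the `cached_at` field, the 24-hour window, and the 1,000-bundle/50-customer numbers come from the text.

```python
from datetime import datetime, timedelta
from typing import Callable

TTL = timedelta(hours=24)


class LookupCache:
    """Read-through cache; a dict stands in for a Make data store."""

    def __init__(self, fetch: Callable[[str], dict], ttl: timedelta = TTL) -> None:
        self._fetch = fetch
        self._ttl = ttl
        self._store: dict[str, dict] = {}
        self.api_calls = 0

    def get(self, customer_id: str, now: datetime) -> dict:
        record = self._store.get(customer_id)
        # Branch A: record found and still fresh (cached_at > now - TTL).
        if record is not None and record["cached_at"] > now - self._ttl:
            return record["data"]
        # Branch B: missing or stale -> call the API and (re)write the record.
        self.api_calls += 1
        data = self._fetch(customer_id)
        self._store[customer_id] = {"data": data, "cached_at": now}
        return data


def fake_api(customer_id: str) -> dict:
    """Made-up stand-in for the real master-data API call."""
    return {"customer_id": customer_id, "tier": "standard"}


cache = LookupCache(fake_api)
start = datetime(2026, 1, 1, 9, 0)

# 1,000 bundles that reference only 50 unique customers.
for i in range(1000):
    cache.get(f"customer-{i % 50}", now=start)
calls_first_run = cache.api_calls

# 25 hours later the record is stale, so the next lookup hits the API again.
cache.get("customer-0", now=start + timedelta(hours=25))
calls_after_expiry = cache.api_calls

print(calls_first_run, calls_after_expiry)
```

Passing `now` in explicitly keeps the freshness check testable; in a scenario the equivalent is comparing `cached_at` with the current timestamp in the lookup filter.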

Performance Anti-Patterns at a Glance

❌ Filter at the very end instead of the start – multiplies operations 5–20×

❌ HTTP modules without timeout – a hung API can block an entire scenario and burn operations until the timeout

❌ Iterator → individual inserts – instead of array aggregator → bulk insert

❌ Dragging full bundles through all modules – instead of Set Variable with whitelist

❌ "Run scenario every minute" for polling – instead of webhook or trigger module

❌ Repeated API calls for the same master data – instead of data-store cache

❌ One mega-scenario – instead of cleanly cut sub-scenarios

Measurement & Optimization Workflow

Without measurement, optimization is gambling. Here's a structured approach:

1. Measure Baseline

- Operations per run: from Make history

- Runtime: difference between started_at and finished_at

- Bundle count per module: visible in run detail

2. Identify Hotspots

Sort modules by operations consumption. Often 80% of operations live in 20% of modules – usually HTTP calls inside loops or missing early filters.
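Once the per-module counts are noted down, ranking them takes only a few lines. The numbers and module names below are invented for illustration, and `hotspots` is a hypothetical helper, not a Make feature.

```python
# Invented per-module operation counts for one run (total: 1,000).
module_ops = {
    "Webhook trigger": 1,
    "Iterator": 1,
    "HTTP: enrich (inside loop)": 850,
    "Set variables": 100,
    "Array aggregator": 1,
    "HTTP: bulk write": 1,
    "Slack notify": 46,
}


def hotspots(ops: dict[str, int], threshold: float = 0.8) -> list[tuple[str, int]]:
    """Return the fewest modules, ranked by operations, that together
    account for at least `threshold` of all operations."""
    total = sum(ops.values())
    ranked = sorted(ops.items(), key=lambda item: item[1], reverse=True)
    selected: list[tuple[str, int]] = []
    running = 0
    for name, count in ranked:
        if running >= threshold * total:
            break
        selected.append((name, count))
        running += count
    return selected


top = hotspots(module_ops)
for name, count in top:
    print(f"{name}: {count} ops ({count / sum(module_ops.values()):.0%})")
```

In this made-up run a single HTTP call inside a loop accounts for 85% of all operations, which is the kind of module to optimize first.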

3. One Change per Iteration

Change one variable at a time, measure again, and compare. Otherwise you can't tell which lever did what.

4. Define an Operations Budget

For each critical scenario, set an operations budget per bundle (e.g. "Max 8 ops per lead"). As soon as a run is significantly above that, it's an alarm signal – usually something has changed in the API response or input data.
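A budget check of this kind is simple to express. In the sketch below, `RunStats`, `over_budget`, and the 25% tolerance used to interpret "significantly above" are all assumptions; only the "max 8 ops per lead" figure comes from the example.

```python
from dataclasses import dataclass


@dataclass
class RunStats:
    operations: int  # total operations of one run, e.g. read from the history
    bundles: int     # number of business objects (leads) processed in that run


def over_budget(run: RunStats, max_ops_per_bundle: float, tolerance: float = 0.25) -> bool:
    """True if the run exceeds the per-bundle budget by more than `tolerance`.

    The 25% default is an assumed reading of "significantly above".
    """
    if run.bundles == 0:
        return False  # nothing processed, so there is nothing to compare
    ops_per_bundle = run.operations / run.bundles
    return ops_per_bundle > max_ops_per_bundle * (1 + tolerance)


BUDGET = 8  # "Max 8 ops per lead"

normal_run = RunStats(operations=800, bundles=100)      # 8 ops per lead
suspicious_run = RunStats(operations=1500, bundles=100)  # 15 ops per lead

print(over_budget(normal_run, BUDGET), over_budget(suspicious_run, BUDGET))
```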

Complementary read: How to continuously monitor operations and runtime is covered in our Monitoring & Observability guide.

Performance Review Checklist

- Filters sit directly after trigger or data source

- Server-side filters used where API allows

- Set Variable with whitelist after trigger

- Iterators run through aggregators into bulk calls

- Bulk endpoints of target APIs used

- Sub-scenarios for reused logic

- Long loops parallelized via sub-scenario webhooks

- Repeated lookups cached via Data Stores

- HTTP modules have timeout & error routes

- Operations budget per scenario documented

- Monitoring on operations trend active

Related Guides

- make.com Automation – The Ultimate Guide

- Error Handling & Retry Strategies

- Monitoring & Observability for make.com

- Security & Secrets Management in make.com

- Make Module Migrator: monday.com V1 to V2

- n8n Best Practices Guide

We Tune Production Make Setups

As a make.com Certified Partner, we run performance audits for teams with high operations volume. Typical result: 40–70% operations savings while making execution faster and more stable.
