Monitoring & Observability for make.com: Datadog, Better Stack & Native Tools (2026)

TL;DR: "Production-grade Make.com monitoring is three layers thick: L1 native history & notifications for your inbox, L2 Better Stack heartbeats for liveness, L3 Datadog (or Grafana) for logs, trends, and SLA reports. Anything without Layer 1 is negligent."

– Till Freitag

Why Make Monitoring Isn't Optional

A make.com scenario is infrastructure as soon as another team depends on it. The moment Sales, Support, or Finance counts on the workflow running, every unnoticed failure becomes a trust problem – no matter how elegant the architecture.

The catch: Make itself is generous but quiet about alerts. The standard notifications are enough for one or two active scenarios. Once you're in production, you need systematic monitoring – otherwise you'll only notice outages after they've already done damage.

The Three Monitoring Layers at a Glance

| Layer | Tool | What it shows | Setup effort |
|---|---|---|---|
| L1: Native | Make History, Notifications, Enhanced Error Monitoring | Which run failed, with which bundle | Low |
| L2: Liveness | Better Stack Heartbeats, Cronitor, healthchecks.io | Is the scenario even still running? | Medium |
| L3: Observability | Datadog, Grafana, Logtail | Trends, SLA, correlated logs across tools | High |

The layers are additive: L2 without L1 is blind, L3 without L1+L2 is overkill. Build them in this order.

Layer 1: Native Make Tools

Enhanced Error Monitoring Dashboard (2026)

Make rolled out the Enhanced Error Monitoring Dashboard in 2026. It's honestly good and often overlooked. It shows:

- Trend analysis per scenario (7 days, 30 days)

- Error rate as a percentage, not just a counter

- Top-5 error sources per scenario (module + error type)

- Comparison against baseline ("340% more errors than usual")

For most teams, this is the only dashboard they need to open in the morning. Pin it.

Configure Notifications Properly

Standard notifications are configurable per user and per scenario. Sensible defaults:

- On scenario stop: always on (mail + in-app)

- On "Incomplete Executions": on, once you have dead-letter queue (DLQ) logic (otherwise spam)

- On "Operations limit reached": on for account owner

⚠️ Watch out for inbox spam: Without proper error handling, notifications quickly become noise. See our Error Handling guide.

Custom Webhook Notifications

Make can fire a webhook on error per scenario. That's the bridge to Layer 2 and 3:

Scenario Settings → Notifications → Webhook

URL: https://hooks.slack.com/services/... (or Better Stack / Datadog)

Trigger: On error

The payload content is customizable – use that for structured logs (JSON with scenario_id, error_type, bundle_id, timestamp).
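On the receiving side, a structured payload is only useful if it is checked before it enters your log pipeline. The following is a minimal sketch, assuming the webhook body carries exactly the four fields named above; treating all four as required, and the function name `parse_error_payload`, are illustrative assumptions rather than anything Make prescribes.

```python
import json

# Field names come from the article; making all four mandatory is an assumption.
REQUIRED_FIELDS = ("scenario_id", "error_type", "bundle_id", "timestamp")


def parse_error_payload(raw_body: str) -> dict:
    """Parse a webhook body and reject it if a required field is missing."""
    payload = json.loads(raw_body)
    if not isinstance(payload, dict):
        raise ValueError("payload must be a JSON object")
    missing = [name for name in REQUIRED_FIELDS if name not in payload]
    if missing:
        raise ValueError("missing fields: " + ", ".join(missing))
    return payload


# Hypothetical example body, shaped like the fields listed above.
example_body = json.dumps({
    "scenario_id": "12345",
    "error_type": "ConnectionError",
    "bundle_id": "bundle_456",
    "timestamp": "2026-04-16T08:23:11Z",
})
parsed = parse_error_payload(example_body)
print(parsed["scenario_id"], parsed["error_type"])
```

Rejecting incomplete payloads early keeps free-text or half-filled alerts out of the structured log stream.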

Layer 2: Better Stack Heartbeats

Native notifications tell you when something fails. They don't tell you when something isn't running at all. That's exactly what heartbeats are for.

The Concept

Instead of asking "Did the scenario throw an error?", you flip the logic: "Has the scenario reported in within the last 5 minutes?"
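The flipped logic can be sketched in a few lines. This is not how Better Stack implements it internally; it is a minimal illustration of the dead-man's-switch idea, and the grace period is a hypothetical parameter added to tolerate slow runs.

```python
from datetime import datetime, timedelta, timezone


def is_heartbeat_missed(last_ping: datetime, now: datetime,
                        interval: timedelta, grace: timedelta) -> bool:
    """Return True when no ping arrived within interval + grace."""
    return now - last_ping > interval + grace


interval = timedelta(minutes=5)   # expected "every 5 min"
grace = timedelta(minutes=1)      # hypothetical tolerance for slow runs
last_ping = datetime(2026, 4, 16, 8, 0, tzinfo=timezone.utc)

# 4 minutes after the last ping: still within the window.
on_time = is_heartbeat_missed(last_ping, last_ping + timedelta(minutes=4), interval, grace)
# 7 minutes after the last ping: interval + grace (6 min) exceeded.
overdue = is_heartbeat_missed(last_ping, last_ping + timedelta(minutes=7), interval, grace)
print(on_time, overdue)
```

The check never looks at error states at all: silence alone is the alarm condition.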

Setup with Better Stack

- In Better Stack, create a Heartbeat with an expected interval (e.g. "every 5 min")

- Better Stack gives you a heartbeat URL: https://uptime.betterstack.com/api/v1/heartbeat/abc123

- In your Make scenario, append an HTTP module at the end: GET https://uptime.betterstack.com/api/v1/heartbeat/abc123

- As long as the run completes, it pings Better Stack

- If the ping is missed → Better Stack alerts via Slack, SMS, phone call

When to Use

- Polling scenarios: "Pull Shopify orders every 15 minutes"

- Cron scenarios: "Send reports daily at 3:00 AM"

- Critical webhook consumers: where downtime is more expensive than sporadic errors

Anti-Pattern

❌ Heartbeat in the main path instead of at the end: If the HTTP module sits in the middle and a later module crashes, Better Stack still shows green.

❌ Heartbeat in the error path: If the error handler pings the same heartbeat, a failing scenario still reports as alive. Use a second HTTP module that pings a separate "error heartbeat" instead – or even better, send a Datadog event.

Layer 3: Datadog (or Grafana) for Observability

If you're in the league of "multiple teams, multiple Make accounts, critical business processes", you need real observability. Datadog is the industry standard here; Grafana with Loki + Prometheus is the open-source alternative.

What You Can Correlate in Datadog

Make is just one module in the chain. A real "lead-to-welcome-email" flow goes like this:

Webform → Make → CRM API → Email tool → Sendgrid

If the lead still has no email three hours later, you want one timeline, not five separate dashboards. Datadog provides this via:

- Make logs (via webhook into the Datadog HTTP intake)

- CRM logs (HubSpot/Salesforce integration)

- Email logs (Sendgrid integration)

- Correlation ID in the bundle that travels through all systems
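To make the "one timeline" idea concrete, here is a minimal sketch of what correlation does with log events from several systems. The event shape (`source`, `correlation_id`, `timestamp`, `message`) and the sample values are assumptions for illustration; in practice Datadog does this grouping for you through a shared attribute.

```python
from collections import defaultdict


def build_timelines(events: list[dict]) -> dict[str, list[dict]]:
    """Group events by correlation_id and order each group by timestamp."""
    timelines = defaultdict(list)
    for event in events:
        timelines[event["correlation_id"]].append(event)
    for steps in timelines.values():
        # ISO 8601 UTC timestamps in one format sort correctly as strings.
        steps.sort(key=lambda e: e["timestamp"])
    return dict(timelines)


# Hypothetical events arriving out of order from three systems.
events = [
    {"source": "email", "correlation_id": "lead_xyz789",
     "timestamp": "2026-04-16T08:23:15Z", "message": "Welcome email queued"},
    {"source": "make", "correlation_id": "lead_xyz789",
     "timestamp": "2026-04-16T08:23:11Z", "message": "Lead received from webform"},
    {"source": "crm", "correlation_id": "lead_xyz789",
     "timestamp": "2026-04-16T08:23:12Z", "message": "Lead created in HubSpot"},
    {"source": "make", "correlation_id": "lead_other",
     "timestamp": "2026-04-16T08:24:00Z", "message": "Lead received from webform"},
]

timelines = build_timelines(events)
for step in timelines["lead_xyz789"]:
    print(step["timestamp"], step["source"], step["message"])
```

Without the shared ID, the same reconstruction means matching timestamps by hand across tools.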

Setup Pattern: Make → Datadog

In the Make scenario, add an HTTP module after each critical module:

POST https://http-intake.logs.datadoghq.eu/api/v2/logs

Headers:

DD-API-KEY: {{datadog_api_key}}

Content-Type: application/json

Body:

{

"ddsource": "make.com",

"service": "lead-onboarding",

"scenario_id": "{{scenario.id}}",

"execution_id": "{{execution.id}}",

"correlation_id": "{{1.correlation_id}}",

"step": "crm_create",

"status": "success",

"duration_ms": 234,

"message": "Lead created in HubSpot"

}

This lets you build dashboards, monitors, and anomaly detection on structured data in Datadog – no heuristics, no log regex.
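For testing the payload outside of Make, the same request can be assembled in a script. This is a sketch under assumptions: the endpoint and header names are the ones shown above, the event is wrapped in a JSON array (the intake also accepts batches), the API key is a placeholder, and the request is only built, not sent, so the example runs offline.

```python
import json
import urllib.request

# Endpoint as given in the article (Datadog EU site).
DATADOG_LOGS_URL = "https://http-intake.logs.datadoghq.eu/api/v2/logs"


def build_log_request(event: dict, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying one log event."""
    body = json.dumps([event]).encode("utf-8")
    return urllib.request.Request(
        DATADOG_LOGS_URL,
        data=body,
        headers={"DD-API-KEY": api_key, "Content-Type": "application/json"},
        method="POST",
    )


# Sample event mirroring the body above; IDs are placeholder values.
event = {
    "ddsource": "make.com",
    "service": "lead-onboarding",
    "scenario_id": "12345",
    "execution_id": "exec_abc123",
    "correlation_id": "lead_xyz789",
    "step": "crm_create",
    "status": "success",
    "duration_ms": 234,
    "message": "Lead created in HubSpot",
}

request = build_log_request(event, api_key="placeholder-key")
print(request.get_method(), request.full_url)
# urllib.request.urlopen(request) would send it; omitted so the sketch stays offline.
```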

Custom Metrics for SLA Reporting

Once logs are structured, you can derive metrics in Datadog:

- make.scenario.runs.total – number of runs per scenario

- make.scenario.runs.failed – number of failures

- make.scenario.duration_ms – p50/p95/p99 runtime

- make.scenario.operations – operations per run

From that you build an SLA dashboard for management: "99.4% success rate over 30 days, p95 runtime 12 seconds." That's the language that works in the management meeting.
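The arithmetic behind those two headline numbers is simple enough to sketch. The run records below are invented sample data, and the nearest-rank percentile is one common definition; Datadog's own percentile aggregation may interpolate differently.

```python
import math


def success_rate(runs: list[dict]) -> float:
    """Share of runs whose status is 'success'."""
    ok = sum(1 for run in runs if run["status"] == "success")
    return ok / len(runs)


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value covering pct percent of runs."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Invented sample: 20 runs, durations 100..2000 ms, one failure.
runs = [
    {"status": "failed" if i == 7 else "success", "duration_ms": (i + 1) * 100}
    for i in range(20)
]
durations = [run["duration_ms"] for run in runs]

rate = success_rate(runs)
p50 = percentile(durations, 50)
p95 = percentile(durations, 95)
print(f"success rate {rate:.1%}, p50 {p50} ms, p95 {p95} ms")
```

Reporting p95 alongside the success rate matters because an average runtime hides exactly the slow runs that users complain about.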

Alerting Strategy: Severity Tiers, Not Everything Is P0

Worst anti-pattern of them all: every error becomes a Slack alert. After three days, the team ignores the channel completely.

Recommended Tiers

| Tier | Trigger | Channel | Response time |
|---|---|---|---|
| P0 | Scenario completely down for > 15 min | PagerDuty / SMS / phone | immediate |
| P1 | Error rate > 5% over 10 min | Slack #alerts | < 30 min |
| P2 | Single errors in non-critical scenario | Daily digest email | < 1 day |
| P3 | Trend anomalies (e.g. "20% more operations than usual") | Weekly report | reactive |
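The tiers can be expressed as a small routing function, which is a useful way to check that the rules do not overlap. This is a sketch, not a Datadog feature: the parameter names are hypothetical, the 20% operations threshold is taken from the example in the table, and errors in a critical scenario that stay under 5% deliberately fall through because the table does not assign them a tier.

```python
from typing import Optional


def classify_alert(minutes_down: float, error_rate_10m: float,
                   error_count: int, is_critical: bool,
                   ops_change_pct: float) -> Optional[str]:
    """Map scenario health signals to a severity tier, highest tier first."""
    if minutes_down > 15:
        return "P0"   # scenario completely down
    if error_rate_10m > 0.05:
        return "P1"   # error rate above 5% over 10 minutes
    if error_count > 0 and not is_critical:
        return "P2"   # single errors in a non-critical scenario
    if ops_change_pct >= 20:
        return "P3"   # trend anomaly, threshold assumed from the table's example
    return None       # nothing to route


CHANNELS = {
    "P0": "PagerDuty / SMS / phone",
    "P1": "Slack #alerts",
    "P2": "Daily digest email",
    "P3": "Weekly report",
}

tier = classify_alert(minutes_down=0, error_rate_10m=0.08,
                      error_count=4, is_critical=True, ops_change_pct=3)
print(tier, "->", CHANNELS[tier])
```

Checking the highest tier first guarantees that an outage is never downgraded to a digest entry just because it also matches a lower rule.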

Concrete Datadog Monitor Config

Monitor: Make Lead Onboarding Failure Rate

Type: Metric Monitor

Query: avg(last_10m):

sum:make.scenario.runs.failed{service:lead-onboarding} /

sum:make.scenario.runs.total{service:lead-onboarding} > 0.05

Notification:

- if status = ALERT → @pagerduty-make-team

- if status = WARN → @slack-make-alerts

Structure Logs Instead of Parsing Them

Build logs as JSON from day one, not as free text. That saves hundreds of hours of regex pain later.

Recommended Fields

{

"timestamp": "2026-04-16T08:23:11Z",

"scenario_id": "12345",

"scenario_name": "lead-onboarding",

"execution_id": "exec_abc123",

"correlation_id": "lead_xyz789",

"step": "crm_create",

"status": "success",

"duration_ms": 234,

"operations_used": 3,

"error_type": null,

"error_message": null,

"bundle_id": "bundle_456"

}

With correlation_id you can track a single lead across all systems. That's gold when debugging.
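If part of the pipeline runs in your own code rather than in Make, a typed record keeps every log line on the same schema. The following is a minimal sketch using the recommended fields; the class name `StepLog` and the choice of which fields are optional are assumptions.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class StepLog:
    """One log line per scenario step, using the recommended fields."""
    timestamp: str
    scenario_id: str
    scenario_name: str
    execution_id: str
    correlation_id: str
    step: str
    status: str
    duration_ms: int
    operations_used: int
    bundle_id: str
    error_type: Optional[str] = None      # stays null on success
    error_message: Optional[str] = None   # stays null on success

    def to_json(self) -> str:
        # sort_keys gives a stable field order, which makes diffs readable.
        return json.dumps(asdict(self), sort_keys=True)


record = StepLog(
    timestamp="2026-04-16T08:23:11Z",
    scenario_id="12345",
    scenario_name="lead-onboarding",
    execution_id="exec_abc123",
    correlation_id="lead_xyz789",
    step="crm_create",
    status="success",
    duration_ms=234,
    operations_used=3,
    bundle_id="bundle_456",
)
line = record.to_json()
print(line)
```

Because missing required fields raise a `TypeError` at construction time, a malformed log line fails loudly in development instead of silently in the dashboard.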

Production Monitoring Checklist

- Native notifications active for all production scenarios

- Enhanced Error Monitoring Dashboard pinned

- Heartbeats for all polling/cron scenarios (Better Stack or similar)

- Structured JSON logs to a central sink (Datadog/Grafana)

- correlation_id flows through all bundles

- SLA dashboard with p95 runtime & success rate per scenario

- Alerting sorted into severity tiers (P0–P3)

- Operations trend monitor (anomaly detection)

- Runbook linked per P0/P1 alert

- Quarterly review: which alerts are noise, which are missing?

Anti-Patterns at a Glance

❌ Native notifications only: You see errors but not silent downtime.

❌ All logs into one email inbox: Gets ignored, gives no trends.

❌ Heartbeats without escalation: A heartbeat fail without an alert is just a nice red dot on the dashboard.

❌ Every error = P0: Alert fatigue guaranteed.

❌ Logs without correlation ID: You'll be comparing timestamps for hours.

❌ No runbook: When the alert hits at 3 AM, no one knows what to do.

Related Guides

- make.com Automation – The Ultimate Guide

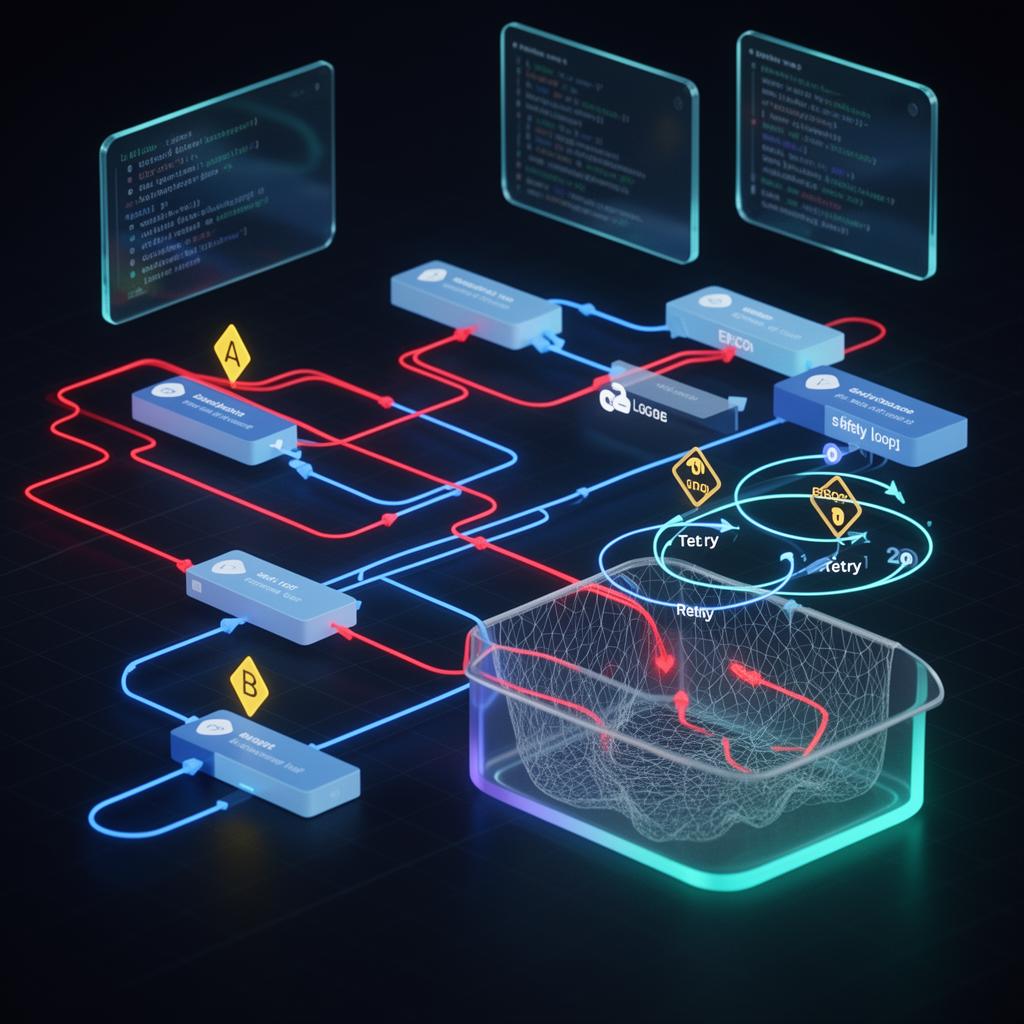

- Error Handling & Retry Strategies

- Security & Secrets Management in make.com

- Performance & Operations Optimization

- Make Module Migrator: monday.com V1 to V2

- n8n Best Practices Guide

We Build Production Monitoring Setups

As a make.com Certified Partner, we design observability stacks that fit your team and budget – from a 3-scenario heartbeat setup to a Datadog SLA dashboard for regulated industries. Including runbooks and onboarding for your ops team.

Make Mastery Series

3 of 6 read · 50%Six articles that take you from first scenarios to production-grade, secure, and performant automations.

- PART 1Unread

make.com Automation – The Ultimate Guide

Intro, comparison with Zapier & n8n, 5 use cases.

Read - PART 2Read

Error Handling & Retry Strategies

Resume, Rollback, Commit, Break – with interactive decision tree.

Read - PART 3ReadHere

Monitoring & Observability

Native dashboards + Better Stack heartbeats + Datadog deep dive.

- PART 4Read

Module Migrator: monday.com V1 → V2

Mandatory migration before May 1, 2026 – step-by-step guide.

Read - PART 5Unread

Security & Secrets Management

Connections, webhooks, IP whitelisting & vault patterns for production setups.

Read - PART 6Unread

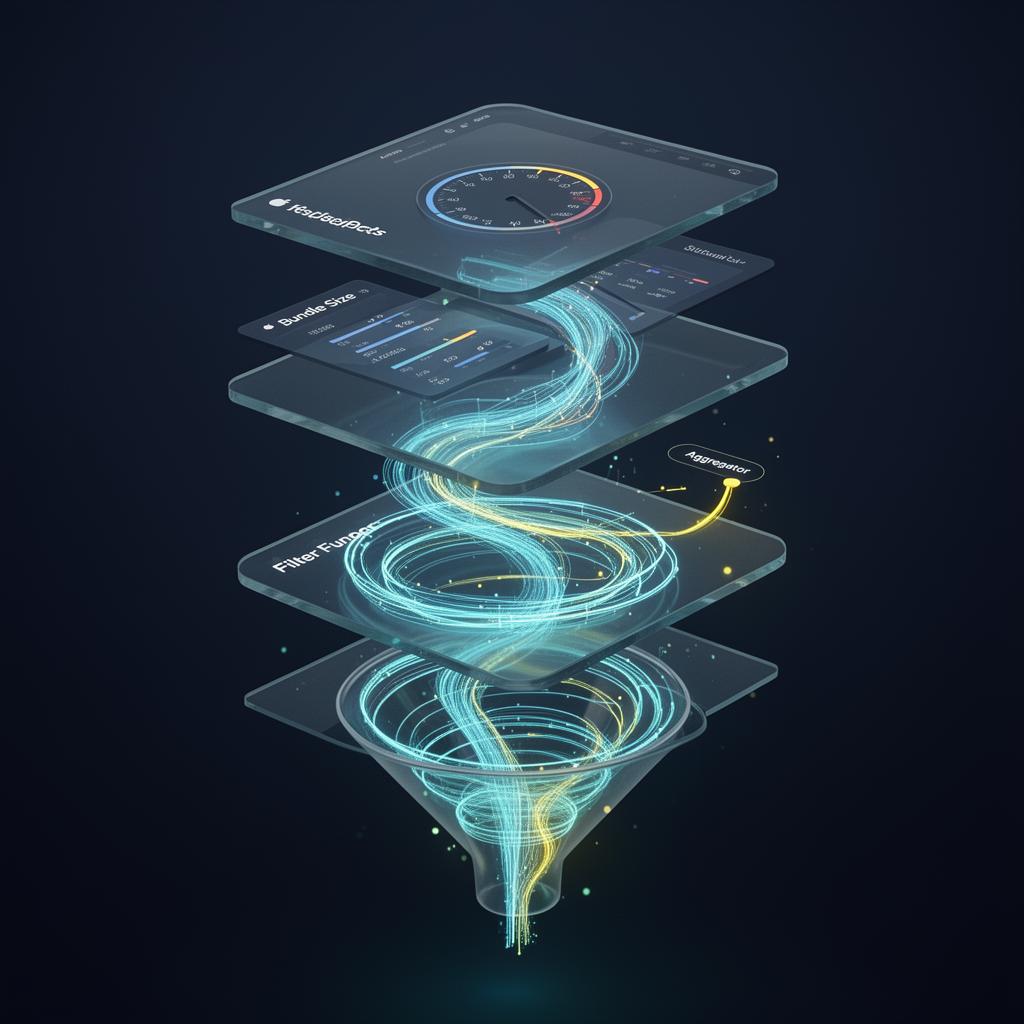

Performance & Operations Optimization

Bundle size, filter order, aggregators & sub-scenarios – 40–70% fewer ops.

Read

Reading progress is stored locally in your browser (localStorage).